ChatGPT hit 100 million users in two months. Midjourney started generating images that fooled art critics. GitHub Copilot began writing production-ready code. If you've been trying to make sense of what's behind all of this, you're not alone. A plain-terms explanation of generative AI is exactly what most technical leaders and founders are searching for right now. The technology has moved from research labs to real business operations faster than almost anyone predicted.

At its core, generative AI is a category of artificial intelligence that creates new content (text, images, code, audio, and video) based on patterns learned from existing data. That sounds simple enough, but the underlying mechanics are surprisingly nuanced. Understanding how these models actually work matters, especially if you're evaluating whether to integrate AI into your product or workflow.

At Brilworks, we build AI-powered applications and launch AI MVPs for startups and enterprises alike. That hands-on engineering work gives us a front-row seat to both the capabilities and the limitations of generative AI. We wrote this guide to share that practical perspective with you.

This article breaks down what generative AI is, how the technology works under the hood, where it's being applied across industries, and what real-world examples look like in 2026. Whether you're a CTO exploring an AI integration or a founder scoping out your next product, you'll walk away with a clear, grounded understanding of generative AI and what it can actually do for your business.

Generative AI is a branch of artificial intelligence that produces new content rather than simply analyzing or classifying existing content. When a model writes a product description, generates a realistic image from a text prompt, or autocompletes your code mid-function, that's generative AI at work. The defining characteristic is output creation: the model doesn't retrieve pre-stored answers; it synthesizes something that did not exist before, based on statistical patterns it learned during training.

To explain generative AI in terms that hold up under scrutiny, it helps to be precise about the mechanics. A generative model is trained on large datasets of examples, such as text scraped from books and websites, or millions of labeled images, and it learns the underlying patterns, relationships, and structures in that data. When you give it a prompt, the model uses those learned patterns to generate a plausible, contextually relevant output. It is not looking things up in a database; it is predicting what content makes the most sense given your input and everything it learned during training.

The core insight here is that generative AI produces probability-based outputs, which is why the same prompt can return different responses on different runs.

These models operate across multiple content types. A single architecture, like a large language model, can handle text generation, translation, summarization, and question answering all within one system. Other architectures specialize in images, audio, or video. What they share is the same foundational logic: learn patterns from data, then use those patterns to produce new content on demand. The sophistication comes from the scale of the data, the size of the model, and the techniques used during training and fine-tuning.

Generative AI is not the same as traditional AI or rule-based automation, and the distinction matters if you're making technology decisions. A rule-based system follows explicit instructions written by a programmer, such as routing a refund request to a specific form based on keyword detection. It cannot produce anything outside the rules it was given. Generative AI, by contrast, can handle inputs it has never seen before because it learned generalizable patterns rather than fixed decision trees.
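To make the contrast concrete, here is a minimal sketch of the kind of keyword routing described above. The rule table and the route names (`refund_form`, `billing_form`, `unhandled`) are hypothetical and only illustrate the idea.

```python
# Hypothetical keyword rules; the route names are illustrative only.
RULES = {
    "refund": "refund_form",
    "invoice": "billing_form",
}


def route_request(message: str) -> str:
    """Return the route of the first rule whose keyword appears in the message."""
    text = message.lower()
    for keyword, route in RULES.items():
        if keyword in text:
            return route
    # Anything the rules did not anticipate falls through unhandled.
    return "unhandled"
```

A message such as "Can I get my money back?" falls through to `unhandled` because no rule mentions those words, which is the kind of gap a model with generalizable patterns is meant to cover.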

It is also not a search engine, even though some products blend the two into a single interface. A search engine retrieves and ranks existing documents from an index. A generative model creates new content, even if it draws on training data to do so. Mixing up these two concepts leads to poor assumptions about reliability. Generative AI can produce confident, well-structured responses that are factually wrong, which is something a traditional search index doesn't typically do. That's not a flaw unique to one product; it's a fundamental property of how these models work.

Finally, generative AI is not a general-purpose intelligence or a system that understands your business the way a human colleague does. By default, it lacks persistent memory between sessions, it cannot take autonomous actions in external systems without additional engineering, and it has no awareness of your proprietary data unless you explicitly provide it through an integration. Understanding these boundaries is not pessimism about the technology; it is the starting point for making sound product and architecture decisions when you move from exploration to actual deployment.

Generative AI has existed in research form for decades, but the version that matters for your business decisions arrived within the last few years. Three things converged simultaneously: model quality crossed a practical usability threshold, compute costs dropped sharply, and cloud providers made powerful models accessible through straightforward APIs. That combination moved generative AI from a research curiosity to a tool your engineering team can integrate into production systems without needing a dedicated machine learning research department.

Before 2022, generating coherent, contextually aware text or photorealistic images required massive in-house infrastructure and specialized ML teams. That changed when foundation models scaled to hundreds of billions of parameters, producing outputs reliable enough for real workflows. The release of models like GPT-3 and then GPT-4 showed that a single pre-trained model could handle tasks across domains, removing the need to build and train specialized models from scratch for every use case.

That shift lowered the barrier to entry from "build a research team" to "call an API," which is why adoption accelerated so quickly across industries.

That architectural breakthrough also changed how software teams think about capability development. Instead of training a custom model for each narrow task, you can now fine-tune or prompt a foundation model and get production-ready results in days rather than months. That speed has real implications for how quickly you can prototype, test, and ship AI-powered features.

Your competitors are not waiting to figure this out. Companies across fintech, healthcare, logistics, and e-commerce are already using generative AI to accelerate content production, automate support workflows, generate synthetic test data, and improve developer output. Microsoft, Google, and Amazon Web Services have all embedded generative AI directly into their developer platforms, which means your existing cloud infrastructure likely already supports it with minimal additional setup.

Now that generative AI is widely accessible, the strategic question has shifted from whether to adopt it to how quickly and how responsibly you move. Teams that build a working understanding now will make better architecture decisions, avoid common integration pitfalls, and reach market faster than those who treat it as something to revisit later. The cost of delayed evaluation is no longer theoretical; it shows up in product velocity and hiring decisions made by your competition today.

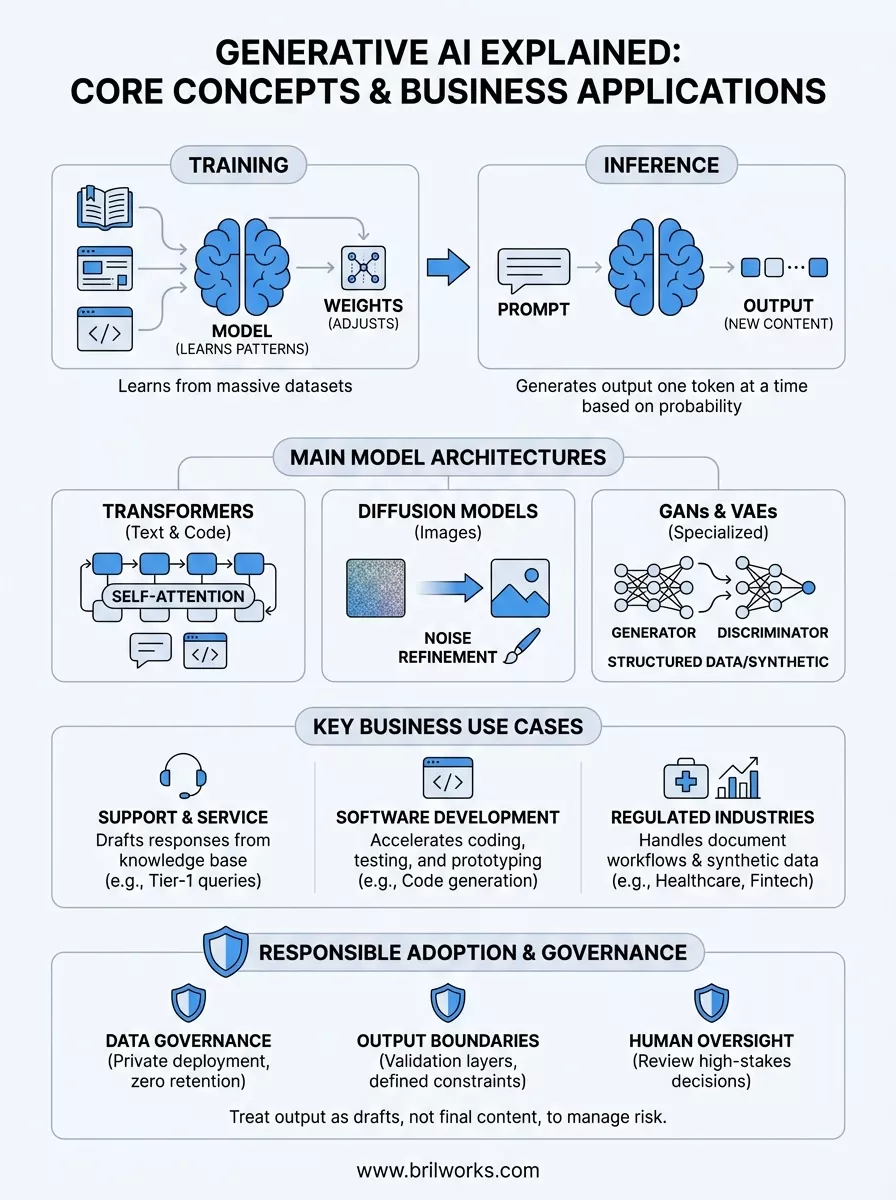

To use generative AI effectively in a product or workflow, you need more than a surface-level description of what it does. You need a working mental model of the process. That process comes down to two phases: training and inference. Each phase involves distinct mechanics, and understanding both will help you make better decisions about what you can reasonably expect from these systems in production.

Training is the phase where the model develops its internal understanding of language, images, or code by processing enormous datasets. During training, the model receives an input and produces a prediction. It then compares that prediction to the correct answer and adjusts its internal parameters, called weights, to reduce the error. This process repeats across billions of examples until the model's predictions become consistently accurate.
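The predict, compare, adjust loop can be sketched with a toy model that has a single weight. This is an illustration under heavy simplification, not how a foundation model is trained: the function name `train`, the learning rate, and the three example pairs are all assumptions made for the sketch.

```python
def train(examples, learning_rate=0.01, epochs=200):
    """Fit y ~ weight * x by repeatedly reducing the squared error."""
    weight = 0.0  # the single "parameter" of this toy model
    for _ in range(epochs):
        for x, target in examples:
            prediction = weight * x             # 1. predict
            error = prediction - target         # 2. compare with the correct answer
            gradient = 2 * error * x            # 3. slope of the squared error w.r.t. the weight
            weight -= learning_rate * gradient  # 4. adjust the weight to reduce the error
    return weight


# Made-up examples that follow the pattern y = 2x.
examples = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
learned_weight = train(examples)
```

After training, `learned_weight * 10` lands very close to 20 even though `x = 10` never appeared in the examples. The weight encodes the pattern, not the individual answers.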

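The key thing to understand is that the model is not built to look up stored answers; it learns statistical patterns, which is what allows it to handle inputs it has never seen before. Models can still reproduce fragments of their training data, but generalization, not recall, is the goal of training.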

Modern foundation models are trained on data scraped from books, websites, code repositories, and other large corpora. That scale is why they generalize across so many tasks. A model trained on enough diverse text can write Python, explain medical concepts, and draft a sales email, all from the same learned parameters. Training is computationally expensive and happens once at scale; the resulting model is then distributed for use.

Inference is what happens when you send a prompt and the model responds. The model takes your input, runs it through its learned weights, and generates output one token at a time. A token is roughly a word or word fragment. At each step, the model calculates the probability distribution over all possible next tokens and selects one based on that distribution. This is why output can vary between runs on the same prompt; the selection process involves controlled randomness.
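A minimal sketch of that selection step is shown below. It assumes the model has already produced a raw score for each candidate token. The scores are made up, and the `temperature` parameter is the name commonly given to the knob that controls how much randomness the sampling allows.

```python
import math
import random


def softmax(scores, temperature=1.0):
    """Turn raw scores into probabilities; lower temperature sharpens the distribution."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next_token(token_scores, temperature=1.0, rng=random):
    """Pick one token at random, weighted by its probability."""
    tokens = list(token_scores)
    probabilities = softmax([token_scores[t] for t in tokens], temperature)
    return rng.choices(tokens, weights=probabilities, k=1)[0]


# Hypothetical scores for the token that follows "The cat sat on the".
scores = {"mat": 2.0, "sofa": 1.0, "moon": 0.1}
next_token = sample_next_token(scores, temperature=0.8)
```

Running `sample_next_token` repeatedly on the same scores returns "mat" most often but not always, which mirrors why the same prompt can produce different responses.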

The quality of your prompt has a direct impact on the quality of the output. The model has no access to your intent beyond what you write, so vague instructions produce vague results. Providing clear context, a defined output format, and relevant constraints in your prompt guides the model toward more useful responses. Prompt engineering, the practice of structuring inputs to get better outputs, is now a real skill that directly affects how much value your team extracts from these systems.
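One lightweight way to apply this is to assemble prompts from named parts, so that context, format, and constraints are never left out by accident. The helper below is a hypothetical sketch; the field labels are an assumption, not a format required by any particular model.

```python
def build_prompt(task, context, output_format, constraints):
    """Assemble a prompt that states the task, context, format, and constraints explicitly."""
    lines = [
        f"Task: {task}",
        f"Context: {context}",
        f"Output format: {output_format}",
        "Constraints:",
    ]
    lines.extend(f"- {constraint}" for constraint in constraints)
    return "\n".join(lines)


prompt = build_prompt(
    task="Summarize the support ticket for an engineer.",
    context="The customer reports login failures after a password reset.",
    output_format="Three bullet points",
    constraints=["Do not speculate about the root cause", "Keep it under 60 words"],
)
```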

Not all generative AI systems work the same way under the hood. Different model architectures handle different content types, and each has distinct strengths and trade-offs. Getting generative AI explained at this level gives you a clearer picture of why certain tools suit specific tasks, and it helps you have more informed conversations with your engineering team when evaluating what to build or integrate.

The transformer architecture is the foundation of most modern text-based generative AI, including ChatGPT, Claude, and Gemini. Transformers use a mechanism called self-attention, which allows the model to weigh the relevance of every word in a sequence against every other word simultaneously. That parallel processing capability is what makes transformers so effective at understanding and generating long, coherent text.

The attention mechanism is why large language models can maintain context across thousands of tokens, something earlier architectures like recurrent neural networks struggled to do reliably.
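The core of self-attention can be sketched in a few lines of plain Python. This is a deliberately simplified version: it uses the token vectors directly as queries, keys, and values, whereas real transformers apply learned projections and run many attention heads in parallel. The two-dimensional vectors are made up for illustration.

```python
import math


def softmax(scores):
    """Normalize scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values = vectors."""
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Score this token against every token in the sequence.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # Each output is a weighted mix of all token vectors.
        mixed = [
            sum(w * value[i] for w, value in zip(weights, vectors))
            for i in range(dim)
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Three toy token vectors; the first and third are identical.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
outputs, weights = self_attention(tokens)
```

In this toy input the first token attends more strongly to the third token, which it resembles, than to the second. That is the "relevance weighting" idea in miniature.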

Large language models built on transformers are pre-trained on vast text corpora, then fine-tuned for specific tasks or aligned to follow instructions. When you prompt a model like GPT-4, you are interacting with a system that has learned the statistical structure of language at enormous scale, which is why it handles such a wide range of tasks from a single set of weights.

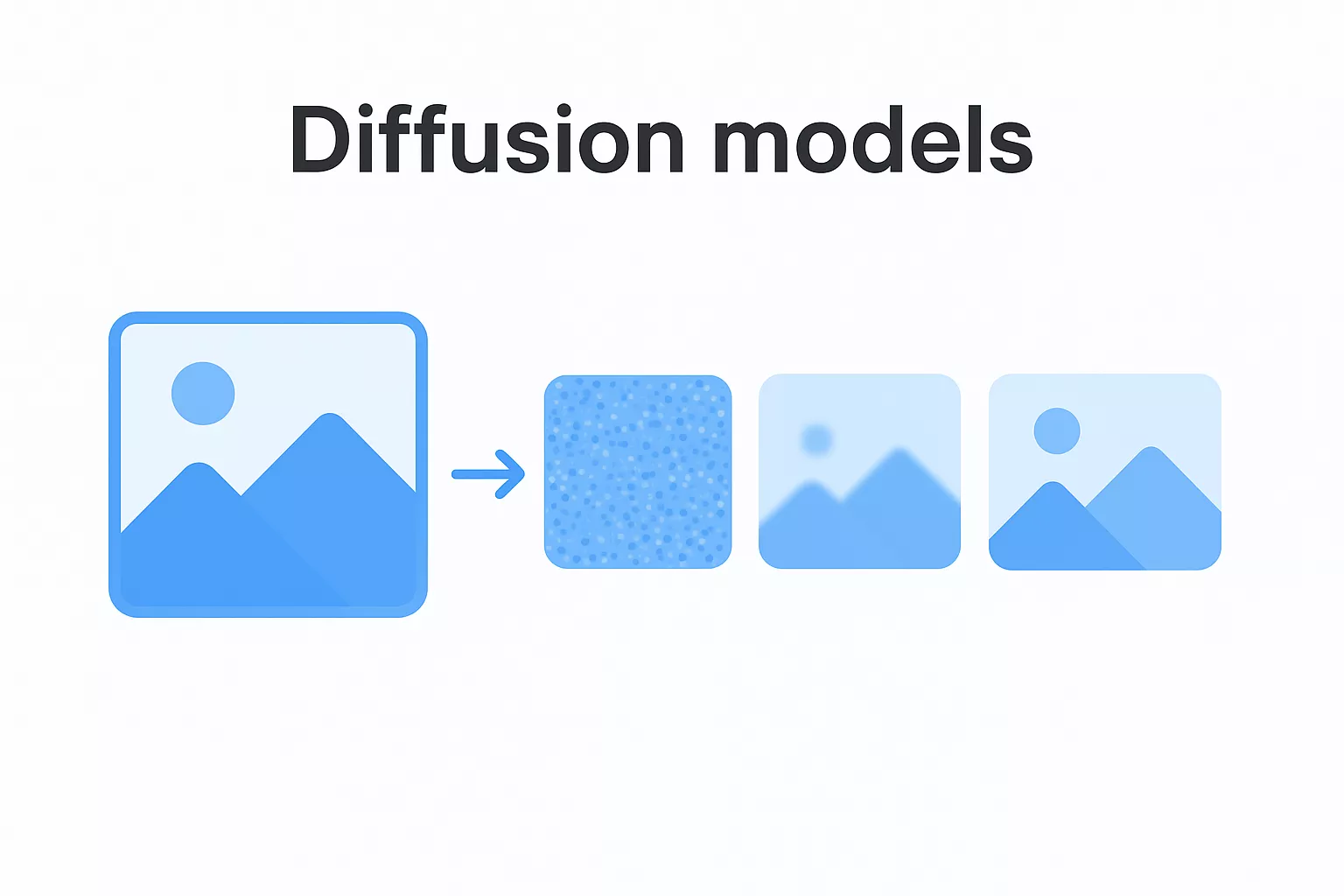

Diffusion models are the architecture behind most modern image generation systems. The training process works by gradually adding noise to an image until it becomes unrecognizable, then teaching the model to reverse that process by removing noise step by step to reconstruct a coherent image. At inference time, the model starts from pure noise and progressively refines it into a realistic output guided by your text prompt.

This approach produces high-quality, detailed images because the iterative refinement process allows the model to make increasingly precise adjustments at each stage rather than generating the full image in a single pass. That step-by-step structure also makes diffusion models easier to guide with conditioning signals like text descriptions or reference images.
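The noising half of that process is simple enough to sketch directly. The example below treats an "image" as a short list of pixel values and applies a standard forward step that shrinks the signal and mixes in Gaussian noise. The noise schedule (`betas`) and pixel values are assumptions chosen for illustration, and the learned denoising step is not shown because it requires a trained network.

```python
import math
import random


def add_noise_step(pixels, beta, rng):
    """One forward step: scale the signal down and mix in Gaussian noise."""
    keep = math.sqrt(1.0 - beta)
    noise_scale = math.sqrt(beta)
    return [keep * p + noise_scale * rng.gauss(0.0, 1.0) for p in pixels]


def forward_diffusion(pixels, betas, rng):
    """Return the full trajectory from the clean input to the noisy result."""
    trajectory = [pixels]
    for beta in betas:
        trajectory.append(add_noise_step(trajectory[-1], beta, rng))
    return trajectory


def remaining_signal(betas):
    """Fraction of the original pixel value still present after all steps."""
    return math.prod(math.sqrt(1.0 - b) for b in betas)


betas = [0.1] * 50  # hypothetical constant noise schedule
trajectory = forward_diffusion([0.9, 0.1, 0.5, 0.7], betas, random.Random(0))
signal_left = remaining_signal(betas)
```

With this schedule less than a tenth of the original signal survives after 50 steps, so the final entry in `trajectory` is close to pure noise. Training teaches a network to undo one such step at a time.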

Before diffusion models became dominant, generative adversarial networks, or GANs, led image generation. GANs train two networks simultaneously: a generator that creates content and a discriminator that tries to identify whether the content is real or synthetic. The generator improves by learning to fool the discriminator, which pushes both networks toward better performance over time.

Variational autoencoders work differently by encoding inputs into a compressed latent space and decoding that representation into new outputs. That structure makes them particularly useful for generating structured data, creating smooth interpolations between two examples, and powering anomaly detection systems in industries like fintech and healthcare.

Once you have generative AI explained at the architectural level, the next practical question is what types of outputs these systems actually produce. The answer is broader than most people initially assume, and the range of content types matters because it directly shapes which business problems you can realistically solve with these tools.

Text is where most people first encounter generative AI, and for good reason. Large language models can produce coherent long-form content such as reports, marketing copy, product documentation, and customer communications. They can also summarize lengthy documents, translate between languages, and answer complex questions with structured reasoning. For engineering teams specifically, code generation is one of the highest-value applications available right now. Tools like GitHub Copilot and models like GPT-4 can write functions, suggest refactors, explain existing code, and generate unit tests, often measurably cutting the time developers spend on repetitive implementation tasks.

Code generation works best when you treat the model as a fast, knowledgeable collaborator rather than an autonomous developer; your review and judgment remain essential.

Beyond text, generative AI can produce photorealistic images, stylized illustrations, and design assets from plain text prompts. Diffusion models handle this domain, and the output quality has reached a level where product teams use generated imagery in real marketing workflows and UI prototypes. On the audio side, models can synthesize realistic speech in multiple languages and voice profiles, generate background music, and clone voice characteristics for dubbing and accessibility applications. Video generation is newer but advancing quickly, with models now capable of producing short clips from text descriptions or extending existing footage with consistent visual style.

Generative AI can also produce structured outputs that go beyond natural language. Models can generate synthetic datasets that mimic the statistical properties of real data, which is valuable when your team needs training data or test data without exposing sensitive customer records. They can produce formatted outputs like JSON, tables, and database queries when prompted with the right context and constraints. This capability is particularly relevant in fintech and healthcare, where teams need to work with realistic data during development without running into privacy and compliance barriers that come with using production datasets directly.
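To show what "mimicking the statistical properties of real data" means, here is a deliberately simple statistical stand-in rather than a generative model: it measures the mean and spread of a few real values and samples new ones from a normal distribution with the same parameters. The function name and the sample amounts are hypothetical.

```python
import random
import statistics


def synthesize_amounts(real_amounts, count, rng):
    """Sample synthetic amounts that share the mean and spread of the real ones."""
    mean = statistics.mean(real_amounts)
    spread = statistics.stdev(real_amounts)
    # Clamp at one cent so no synthetic transaction has a non-positive amount.
    return [round(max(0.01, rng.gauss(mean, spread)), 2) for _ in range(count)]


real_amounts = [12.5, 40.0, 33.2, 8.9, 27.4]  # stand-in for sensitive records
synthetic_amounts = synthesize_amounts(real_amounts, 1000, random.Random(42))
```

The synthetic values resemble the originals in aggregate without being drawn from any customer record. A generative model extends the same idea to far richer structure, such as correlated fields and realistic sequences.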

Getting generative AI explained in theory is useful, but seeing it applied to real business workflows is what helps you evaluate where it fits in your own operations. Across industries, companies are deploying generative AI not as a novelty but as a direct component of production systems that handle real volume and generate measurable output. The examples below show what that looks like in practice.

Companies in e-commerce and SaaS are using large language models to handle tier-one support queries at scale. Instead of routing every inbound message to a human agent, the model reads the customer's message, pulls relevant context from a knowledge base, and drafts a response that a human can review or send directly. Teams that implement this well report significant reductions in average handling time without a corresponding drop in customer satisfaction scores. The model doesn't replace your support staff; it removes the repetitive drafting work so your agents can focus on complex, high-value interactions.

The highest-impact deployments pair the generative model with a well-maintained internal knowledge base, because output quality depends directly on the quality of the context you feed in.
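The pattern of pulling context from a knowledge base before drafting can be sketched as follows. Word overlap stands in for the retrieval step, where production systems typically use embedding-based search, and the knowledge base entries, function names, and prompt wording are all hypothetical.

```python
import re


def words(text):
    """Lowercase a string and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, knowledge_base, top_k=1):
    """Rank articles by how many words they share with the question."""
    question_words = words(question)
    scored = sorted(
        ((len(question_words & words(body)), title)
         for title, body in knowledge_base.items()),
        reverse=True,
    )
    return [title for overlap, title in scored[:top_k] if overlap > 0]


def build_support_prompt(question, knowledge_base):
    """Combine the retrieved context and the customer question into one prompt."""
    titles = retrieve(question, knowledge_base)
    context = "\n".join(knowledge_base[t] for t in titles) or "No matching article found."
    return f"Answer using only this context:\n{context}\n\nCustomer question: {question}"


knowledge_base = {
    "returns": "You can return any item within 30 days for a full refund",
    "shipping": "Standard shipping takes five business days",
}
support_prompt = build_support_prompt("How do I get a refund for my item?", knowledge_base)
```

If the knowledge base article is outdated or missing, the prompt carries that gap straight into the model's draft, which is why the quality of the context matters so much.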

Engineering teams are using code generation tools to accelerate implementation across the full development cycle. A developer can describe a function in plain language and receive a working draft, ask the model to write unit tests for an existing module, or get a plain-language explanation of unfamiliar code during a review. Beyond individual productivity, product teams use AI-assisted prototyping to build and test UI concepts in hours rather than days, which shortens the feedback loop between design and engineering significantly.

In regulated industries, generative AI is handling document-intensive workflows that previously required significant manual effort. Healthcare providers use it to draft clinical notes from recorded consultations, with clinicians reviewing for accuracy rather than writing from scratch. Fintech teams generate synthetic transaction datasets for model training and compliance testing without exposing real customer records. In media and marketing, content teams use generative AI to produce first drafts, localize copy across languages, and generate product descriptions at the scale that large catalogs require. Each of these use cases follows the same pattern: the model handles high-volume, structured output, and your team applies judgment where it matters most.

With generative AI explained and the use cases clear, the next question is how to move forward without introducing serious risks into your operations. Responsible adoption is not about slowing down; it's about building systems that your team, your customers, and your stakeholders can rely on. Organizations that get this right treat governance and technical architecture as the same conversation, not separate workstreams that meet only at the end of a project.

Before you connect any generative AI system to your internal data, you need to define what data the model can access and what it cannot. Sensitive customer records, proprietary business logic, and personally identifiable information all require explicit controls. Many API-based models, including those offered through Microsoft Azure and AWS, provide options for private deployment or zero data retention, which means your inputs are not used to retrain the underlying model. Review those settings before you write a single line of integration code.
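One simple control is to redact obvious identifiers before any text leaves your systems. The sketch below is illustrative only: the two regular expressions catch common email and card-number shapes, are not exhaustive, and do not replace a proper data classification process.

```python
import re

# Illustrative patterns only; real PII detection needs broader coverage.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
CARD = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def redact(text):
    """Replace email addresses and card-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return CARD.sub("[CARD]", text)


safe_text = redact("Contact jane@example.com about card 4111 1111 1111 1111 today")
```

Here `safe_text` becomes "Contact [EMAIL] about card [CARD] today", so the downstream prompt never contains the raw values.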

Your data governance policy should also specify who inside your organization can submit what types of data to external AI services. A clear internal policy prevents well-meaning employees from accidentally sending confidential documents to a public API endpoint, which is a real and underestimated risk in fast-moving development environments.

Generative AI produces probabilistic outputs, which means it can generate content that sounds confident but is factually incorrect or contextually inappropriate. You reduce that risk by defining output boundaries in your system prompts and building validation layers that check responses before they reach end users. In customer-facing applications, this matters most; a model that generates an incorrect refund policy or a misleading product claim creates real liability that your legal and compliance teams will need to address.

The most reliable production deployments treat model outputs as drafts that pass through a defined review or filtering step, not as final content that ships automatically.
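A validation layer can be as small as a function that refuses anything that does not match your policy. The sketch below assumes the model has been asked to reply in JSON with a `message` field and an optional `refund_days` field. Both field names and the policy value are hypothetical.

```python
import json


def validate_reply(raw_output, allowed_refund_days):
    """Return (is_valid, reason) for a model reply that should be a JSON object."""
    try:
        reply = json.loads(raw_output)
    except json.JSONDecodeError:
        return False, "not valid JSON"
    if not isinstance(reply, dict):
        return False, "expected a JSON object"
    message = reply.get("message")
    if not isinstance(message, str) or not message.strip():
        return False, "missing message"
    # Reject any stated refund window that differs from the real policy.
    if reply.get("refund_days") not in (None, allowed_refund_days):
        return False, "refund window does not match policy"
    return True, "ok"


result = validate_reply('{"message": "You have 90 days.", "refund_days": 90}', 30)
```

In this example `result` is `(False, "refund window does not match policy")`, so the incorrect claim is caught before it reaches a customer.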

No matter how capable the model is, your team should retain meaningful decision-making authority over any output that affects customers, finances, or compliance obligations. Design your workflows so that a human reviews or approves high-stakes outputs rather than letting the model act autonomously end-to-end. This is not a limitation you work around; it is a sound engineering principle that keeps your systems accountable and auditable as the technology continues to evolve.
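In code, that principle often reduces to a routing decision made before anything is sent. The sketch below uses a hypothetical set of high-stakes topics and two in-memory lists standing in for a review queue and an outbound channel.

```python
# Hypothetical topics that always require human approval.
HIGH_STAKES_TOPICS = {"refund", "billing", "compliance"}


def dispatch(draft, topic, review_queue, outbox):
    """Send low-risk drafts directly and hold high-stakes drafts for a human."""
    if topic in HIGH_STAKES_TOPICS:
        review_queue.append(draft)
        return "needs_review"
    outbox.append(draft)
    return "sent"


review_queue, outbox = [], []
first = dispatch("Your refund has been approved.", "refund", review_queue, outbox)
second = dispatch("Our office opens at 9 am.", "hours", review_queue, outbox)
```

Keeping the decision in one function also gives you a single place to log who approved what, which supports the auditability described above.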

You now have generative AI explained across every layer that matters for real business decisions: what it is, how it works, what models drive it, what it produces, where it's already being applied, and how to adopt it without introducing unnecessary risk. That foundation gives you something concrete to work with when your team starts evaluating specific tools, scoping integration projects, or pitching AI-powered features to stakeholders.

The gap between understanding generative AI and deploying it in a production system is where most teams slow down. Scoping the right use case, choosing the right architecture, and building the right guardrails all require engineering judgment that goes beyond reading a guide. If you're ready to move from exploration to execution, our team at Brilworks builds and ships AI-powered applications for startups and enterprises across fintech, healthcare, and logistics. Talk to our AI development team to discuss what you're building and where generative AI fits into your roadmap.

Generative AI refers to AI systems that create new, original content, including text, images, audio, video, and code, based on patterns learned from vast datasets. Put simply, these are machine learning models that generate human-like outputs rather than just analyzing or classifying existing data, which is changing how we create content and solve problems.

Technically, generative AI involves neural networks trained on massive datasets to learn patterns, structures, and relationships in data. In more depth, these models use architectures like transformers, GANs (Generative Adversarial Networks), or diffusion models to generate new content by predicting what should come next based on learned patterns, context, and user prompts.

The main types of generative AI include Large Language Models (LLMs) like GPT and Claude for text, image generators like DALL-E and Midjourney, audio synthesis models for music and voice, video generation models, and code generation tools. Each type serves different creative and practical applications.

Common applications of generative AI include content creation (articles, marketing copy), software development (code generation, debugging), design work (images, logos, UI mockups), customer service (chatbots), data analysis and reporting, personalized recommendations, virtual assistants, creative writing, video production, and business process automation.

The difference between generative AI and traditional AI is that traditional AI analyzes, classifies, or makes predictions based on existing data, while generative AI creates new content. For example, traditional AI might identify a cat in a photo, whereas generative AI can create a completely new, original cat image.

