The terms get thrown around almost interchangeably, but generative AI and machine learning refer to two fundamentally different capabilities. One predicts outcomes from data. The other creates new content, such as text, code, and images, that never existed before. If you're a technical leader evaluating where to invest, understanding this distinction matters more than any buzzword.

Machine learning has been the workhorse behind recommendation engines, fraud detection, and predictive analytics for years. Generative AI, built on top of machine learning foundations, flipped the script by shifting from analysis to creation. Both fall under the artificial intelligence umbrella, but they solve very different problems and demand different implementation strategies, from model selection to infrastructure and data pipelines.

At Brilworks, we build AI-powered products for startups and enterprises, and the most common question we hear from CTOs and founders is some version of: "Which one do I actually need?" The answer depends on your use case, your data, and what you're trying to achieve. This article breaks down the technical differences, the hierarchy between these two concepts, and real-world use cases so you can make that call with clarity.

When you're building a product, the technology you choose shapes everything downstream: your data requirements, your infrastructure costs, your team's skill set, and how long it takes to ship something useful. The generative AI vs machine learning decision isn't a detail you can defer until later in the development cycle. Making that choice early determines whether you're solving the right problem with the right tool, or spending months building the wrong thing with precision.

Product teams that conflate generative AI with machine learning often end up over-engineering their solutions. If you need to predict customer churn or flag fraudulent transactions, you don't need a large language model. A well-tuned classification model does that job faster, at a fraction of the cost, and with far less data preparation overhead. Deploying a generative AI system when a simpler ML model suffices inflates your cloud compute bill and adds unnecessary complexity to your architecture.

The inverse is equally costly: using a standard ML model for a task that demands content generation, like building a document assistant or a code copilot, produces a system that simply cannot do the job no matter how much you optimize it.

The gap also shows up in production timelines. Generative AI models require significant prompt engineering, safety testing, and often fine-tuning on domain-specific data before they behave reliably inside a real product. Traditional ML pipelines, by contrast, follow more predictable development patterns that most engineering teams already understand. If your team underestimates that complexity gap, you'll burn sprint cycles realigning scope and resetting stakeholder expectations.

The two approaches have fundamentally different data requirements, and that affects how you structure your pipelines from day one. Machine learning models typically train on structured, labeled datasets, so your data collection and labeling strategy needs to align directly with the prediction task you're solving. You need clean, consistent historical data, and the quality of that data directly determines model accuracy. Getting that pipeline wrong early means retraining from scratch later.
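As a minimal sketch of what "clean, consistent historical data" can mean in practice, the Python below assumes a hypothetical churn dataset. The `LabeledExample` fields are illustrative, not a required schema. The code rejects rows with impossible values, then splits by date so the model is validated on records newer than the ones it trained on. A date-based split is one common choice, not the only valid one.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class LabeledExample:
    # Hypothetical schema: a few features for one customer plus the known outcome.
    customer_id: str
    observed_on: date
    monthly_spend: float
    support_tickets: int
    churned: bool  # the label the model learns to predict


def validate(rows):
    """Split rows into (clean, rejected), rejecting impossible feature values."""
    clean, rejected = [], []
    for row in rows:
        if row.monthly_spend < 0 or row.support_tickets < 0:
            rejected.append(row)
        else:
            clean.append(row)
    return clean, rejected


def time_split(rows, cutoff):
    """Train on history before the cutoff; validate on records from the cutoff onward."""
    train = [r for r in rows if r.observed_on < cutoff]
    valid = [r for r in rows if r.observed_on >= cutoff]
    return train, valid


rows = [
    LabeledExample("c1", date(2023, 1, 5), 120.0, 0, False),
    LabeledExample("c2", date(2023, 3, 9), 40.0, 4, True),
    LabeledExample("c3", date(2023, 6, 1), -10.0, 1, False),  # negative spend: bad record
    LabeledExample("c4", date(2023, 8, 20), 75.0, 2, True),
]
clean, rejected = validate(rows)
train, valid = time_split(clean, cutoff=date(2023, 6, 1))
print(len(clean), len(rejected), len(train), len(valid))  # 3 1 2 1
```

Splitting by time rather than at random keeps future information out of training, which matters when the model will only ever see new data in production.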

Generative AI introduces a separate challenge entirely. Many teams use pre-trained foundation models from providers like Google or Microsoft and adapt them through fine-tuning or retrieval-augmented generation rather than training from scratch. That shifts your data strategy toward curating high-quality context data, managing vector embeddings, and building reliable retrieval systems. Both approaches demand rigor, but the shape of that rigor is completely different, and conflating them leads to a data architecture that serves neither use case well in production.
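The retrieval step of retrieval-augmented generation can be sketched in plain Python. This is a toy built on stated assumptions. `embed` is a bag-of-words stand-in for a real embedding model. The in-memory list stands in for a vector database. The prompt template is hypothetical. Production systems use learned embeddings and an approximate nearest-neighbor index, but the shape is similar: embed the query, rank stored content by similarity, and pass the top matches to the model as context.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words term-count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items() if term in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=2):
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query, context):
    """Ground the model's answer in retrieved context (hypothetical template)."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"


docs = [
    "Refunds are processed within 5 business days.",
    "Our office is closed on public holidays.",
    "Refunds require the original order number.",
]
question = "How long do refunds take?"
print(build_prompt(question, retrieve(question, docs, k=2)))
```

The model itself never changes here. The quality of the answer depends on what the retrieval step surfaces, which is why the data work shifts toward curating context and maintaining the index.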

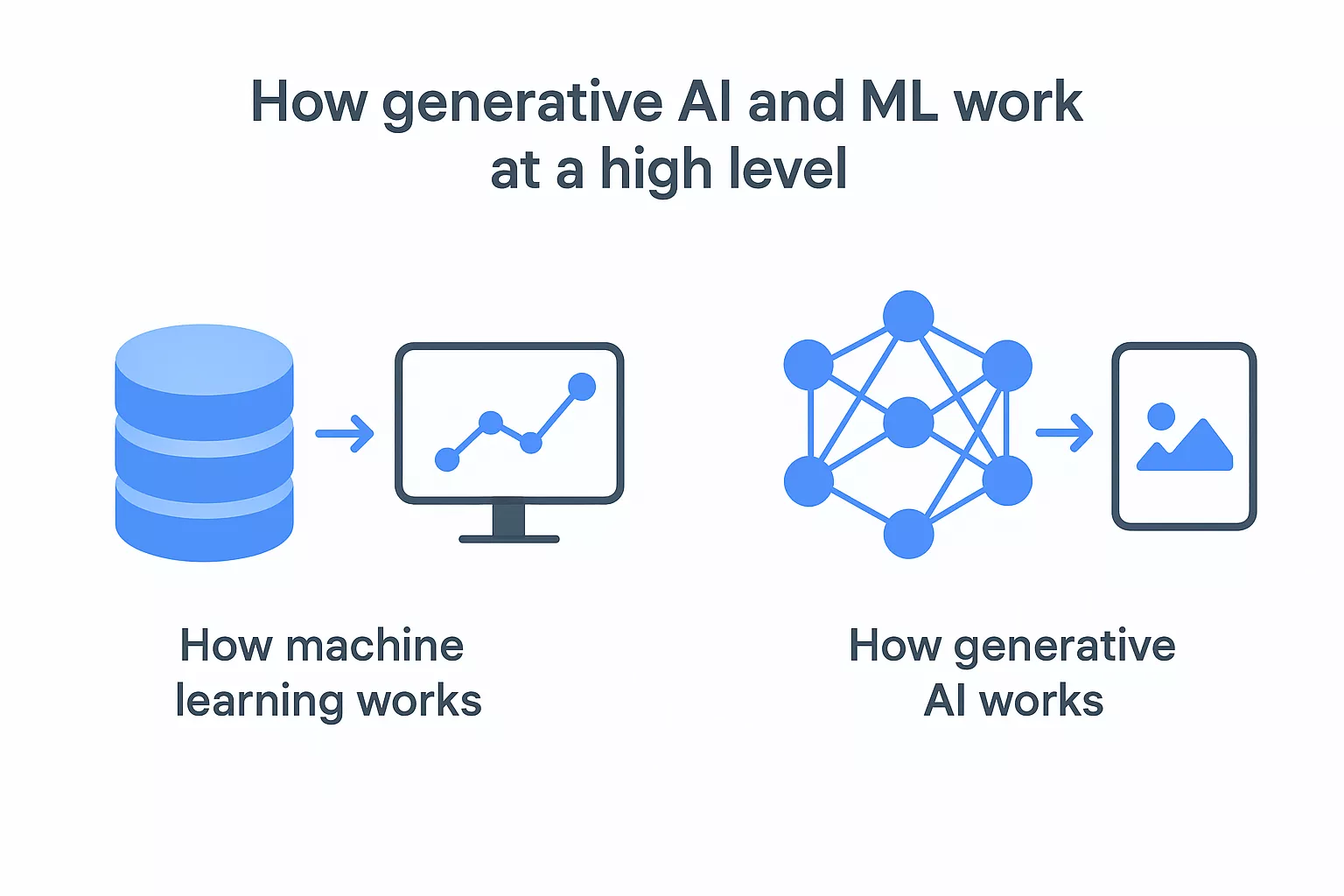

Both approaches use data to learn patterns, but what they do with those patterns is where generative AI and machine learning split into two distinct capabilities. Traditional machine learning models analyze input data and return a prediction, classification, or ranked score. Generative AI models use the same foundational learning mechanism but produce new outputs, such as text, images, code, or audio, that didn't exist in the training set.

A traditional ML model trains on labeled historical data to identify statistical relationships between inputs and outputs. During training, the model adjusts its internal parameters to minimize prediction error across that dataset. Once you deploy it, it takes new input and returns a specific answer within the boundaries of what it was trained to predict, nothing more, nothing less.

The core mechanic is optimization: the model keeps adjusting its parameters until its predictions align closely enough with known labels to be reliable in production.

Most ML workflows rely on structured, tabular data and tightly scoped problem definitions. You're teaching the model to answer one specific question repeatedly, such as "Will this transaction be fraudulent?" or "Which product will this user click next?"
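Here is a minimal sketch of that optimization loop. It assumes logistic regression trained by plain gradient descent, which is one of many possible model choices, on a tiny, made-up fraud dataset. Each pass nudges the weights in the direction that reduces log loss. That is the "adjust parameters to minimize prediction error" step described above. The trained model answers only the question it was built for: how likely a transaction is to be fraudulent.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def predict_proba(w, b, x):
    """Predicted probability that example x belongs to the positive class."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def train_logistic(xs, ys, lr=0.5, epochs=2000):
    """Fit weights w and bias b by full-batch gradient descent on log loss."""
    n, d = len(xs), len(xs[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for x, y in zip(xs, ys):
            err = predict_proba(w, b, x) - y  # prediction error against the known label
            for j in range(d):
                grad_w[j] += err * x[j]
            grad_b += err
        # Step against the average gradient so the loss goes down.
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


# Made-up data: [amount in $1000s, is_foreign_merchant]; label 1 means fraud.
xs = [[0.1, 0], [0.3, 0], [0.2, 1], [2.5, 1], [3.0, 1], [2.8, 0]]
ys = [0, 0, 0, 1, 1, 1]
w, b = train_logistic(xs, ys)
print(f"fraud risk, $2,900 foreign: {predict_proba(w, b, [2.9, 1]):.3f}")
print(f"fraud risk, $150 domestic:  {predict_proba(w, b, [0.15, 0]):.3f}")
```

In practice you would reach for a library implementation and far more data. The loop above only exposes the mechanic those libraries automate.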

Generative AI models, most commonly large language models or diffusion models, train on massive unstructured datasets using self-supervised learning objectives. Rather than predicting a fixed label, a language model learns to predict the next token in a sequence, while a diffusion model learns to reconstruct images or audio from progressively noised versions of the training data. Through this process, the model builds a compressed internal representation of the patterns, context, and relationships in that data.
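A toy example shows the self-supervised idea. The training targets come from the raw text itself, so nobody has to label anything. The sketch below builds (context, next token) pairs and counts them. Real language models learn these statistics with a neural network over far longer contexts rather than with a count table, so treat this as an illustration of the objective only.

```python
from collections import Counter, defaultdict


def next_token_pairs(tokens, context_size=2):
    """Turn raw text into (context, next_token) training pairs.

    No human labeling is needed: each target is the token that follows its context.
    """
    return [
        (tuple(tokens[i - context_size:i]), tokens[i])
        for i in range(context_size, len(tokens))
    ]


def next_token_counts(pairs):
    """Count how often each token follows each context: a crude learned distribution."""
    counts = defaultdict(Counter)
    for context, target in pairs:
        counts[context][target] += 1
    return counts


corpus = "the model predicts the next token and the model learns patterns".split()
pairs = next_token_pairs(corpus)
counts = next_token_counts(pairs)
print(pairs[0])                  # (('the', 'model'), 'predicts')
print(counts[("the", "model")])  # Counter({'predicts': 1, 'learns': 1})
```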

When you prompt a generative model, it samples from that learned distribution to produce output that is contextually coherent and novel. Google DeepMind, for example, has scaled this approach into models capable of reasoning, summarization, and multi-step code generation across diverse domains.
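Sampling from a learned distribution is typically done over the model's raw scores (logits) for each candidate token. The sketch below assumes hypothetical logits for a three-word vocabulary. It applies softmax with a temperature parameter, a common but not universal decoding setup. Lower temperatures concentrate probability on the highest-scoring token, and higher temperatures spread it out.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Convert raw token scores into a probability distribution."""
    scaled = [score / temperature for score in logits]
    peak = max(scaled)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next(vocab, logits, temperature=1.0, rng=random):
    """Draw one token at random, weighted by its softmax probability."""
    probs = softmax(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]


vocab = ["report", "summary", "banana"]
logits = [2.0, 1.5, -1.0]  # hypothetical scores a model assigned to each candidate

for t in (0.5, 1.0, 2.0):
    print(t, [round(p, 3) for p in softmax(logits, t)])

rng = random.Random(0)  # seeded so the run is reproducible
print([sample_next(vocab, logits, temperature=0.7, rng=rng) for _ in range(5)])
```

Because each draw is random, the same prompt can yield different outputs. That variability is part of what makes generative systems harder to test than a classifier.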

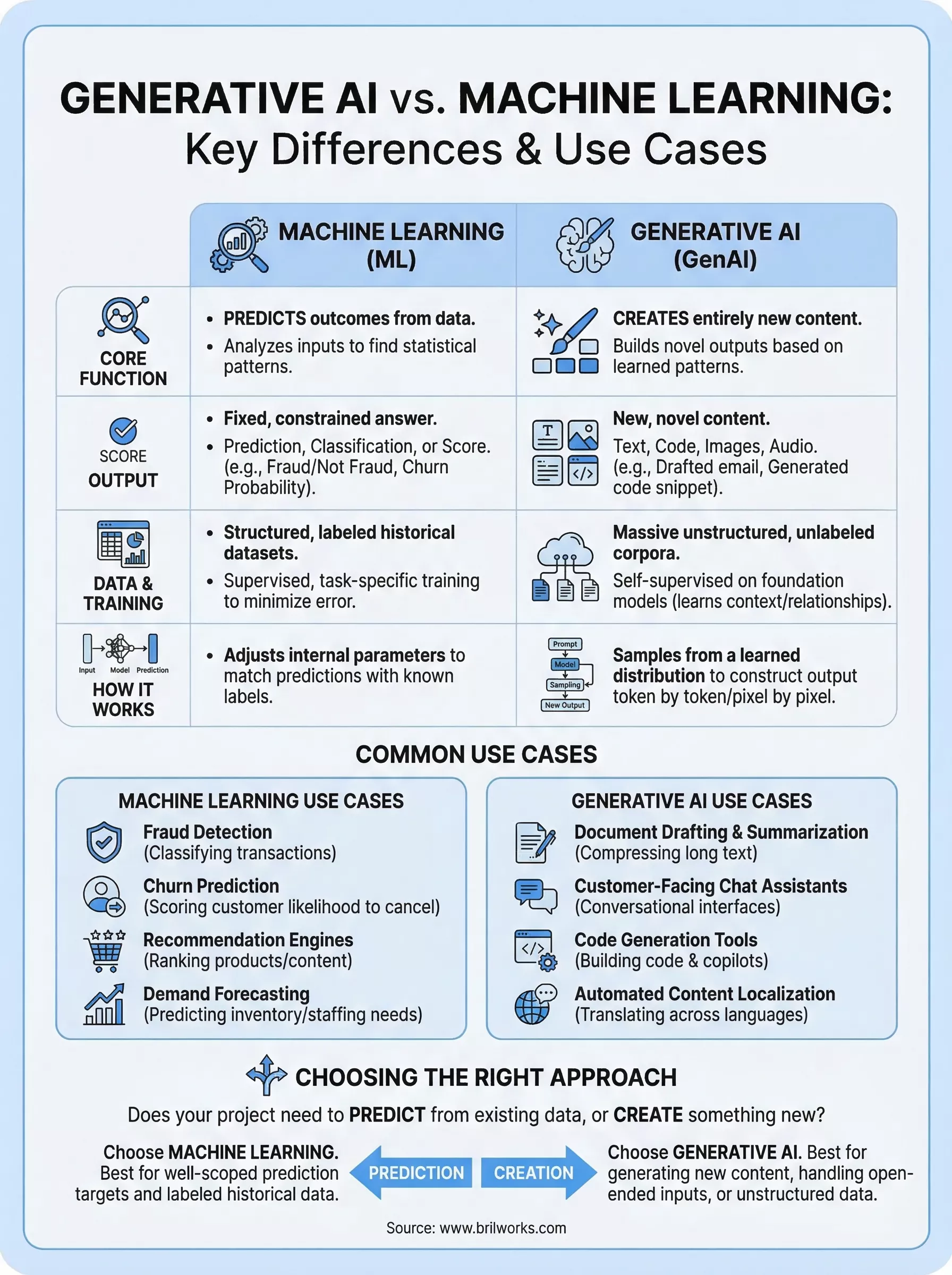

Understanding generative AI vs machine learning at a structural level comes down to three dimensions: output type, data requirements, and training approach. Each one reflects a fundamental difference in what these systems are designed to do, not just how they're implemented.

| Dimension | Machine Learning | Generative AI |
|---|---|---|
| Output | Prediction, score, or label | New text, code, images, or audio |
| Data | Structured, labeled datasets | Unstructured, unlabeled corpora |
| Training | Supervised or task-specific | Self-supervised pre-training of foundation models |

A traditional ML model returns a constrained, predefined answer: a probability score, a label, or a ranked result. The output space is fixed at design time. You define what the model can produce before you ever write a line of training code.
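In code, a fixed output space often looks like an enumeration defined before training. This sketch assumes a fraud model that emits a probability, plus hypothetical thresholds that map that probability onto three predefined labels. Whatever the input, the system can only ever return one of those labels.

```python
from enum import Enum


class FraudLabel(Enum):
    # The complete output space, fixed before any training happens.
    LEGITIMATE = "legitimate"
    REVIEW = "review"
    FRAUDULENT = "fraudulent"


def to_label(fraud_probability, review_at=0.5, block_at=0.9):
    """Map a model's probability score onto one of the predefined labels."""
    if not 0.0 <= fraud_probability <= 1.0:
        raise ValueError("fraud_probability must be between 0 and 1")
    if fraud_probability >= block_at:
        return FraudLabel.FRAUDULENT
    if fraud_probability >= review_at:
        return FraudLabel.REVIEW
    return FraudLabel.LEGITIMATE


for score in (0.12, 0.63, 0.97):
    print(score, to_label(score).value)
```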

Generative models operate under completely different constraints. Instead of selecting from a fixed set of outcomes, they sample from a learned distribution to produce novel content. The output isn't retrieved from training data. It's constructed step by step, token by token for a language model or through successive denoising passes for a diffusion model, based on patterns the model internalized during training.

This is why you cannot reframe a classification model as a generative one by tuning it harder. The architectures themselves solve fundamentally different problems.
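To make token-by-token construction concrete, here is a toy autoregressive generator. The `NEXT` table is a hypothetical stand-in for a trained model, and it conditions only on the previous token. A real language model conditions on the entire preceding context. What carries over is the loop structure: pick a token, append it, and repeat until an end marker appears.

```python
import random

# Hypothetical next-token probabilities standing in for a trained model.
NEXT = {
    "<start>": {"the": 0.6, "a": 0.4},
    "the": {"report": 0.5, "summary": 0.5},
    "a": {"report": 0.7, "summary": 0.3},
    "report": {"covers": 0.6, "<end>": 0.4},
    "summary": {"covers": 0.5, "<end>": 0.5},
    "covers": {"revenue": 0.5, "risk": 0.5},
    "revenue": {"<end>": 1.0},
    "risk": {"<end>": 1.0},
}


def generate(rng, max_tokens=10):
    """Build output one token at a time, each choice conditioned on the last token."""
    token, output = "<start>", []
    for _ in range(max_tokens):
        options = NEXT[token]
        token = rng.choices(list(options), weights=list(options.values()), k=1)[0]
        if token == "<end>":
            break
        output.append(token)
    return " ".join(output)


rng = random.Random(42)
for _ in range(3):
    print(generate(rng))
```

None of the generated sentences is stored anywhere in `NEXT`. Each one is assembled from learned transitions, which is the property a classifier with a fixed label set cannot reproduce.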

Traditional ML pipelines depend on labeled, structured data where every training example carries a known outcome. You build your model around a specific prediction task, and your data collection strategy follows directly from that task definition. Annotation quality directly determines how accurate your model becomes in production.

Generative AI models train on massive unstructured corpora using self-supervised objectives, which means they learn without manually labeled examples. Most product teams skip training from scratch entirely and fine-tune pre-trained foundation models, adapting them to specific domains through curated context data and retrieval-augmented generation rather than raw compute investment.

Knowing the theory behind generative AI vs machine learning only takes you so far. What actually clarifies the choice is seeing where each approach performs in production, across real product categories and business problems your team is likely building toward.

Machine learning is the right tool when your problem has a clearly defined prediction target and enough historical data to train against. It consistently delivers reliable, production-ready performance on tasks such as fraud detection, customer churn prediction, recommendation engines, and spam filtering.

Each of these tasks shares a common structure: a fixed output type and a large set of labeled historical examples to drive training.

Generative AI belongs in your stack when the output itself is the product. If your users need something new constructed, not just a prediction returned, this is where generative models justify their added complexity.

Generative AI doesn't retrieve answers from a database; it constructs them, which is what makes it the right fit for tasks where no fixed answer exists in advance.

Common generative AI applications include document drafting and summarization, customer-facing chat assistants, code generation tools, and automated content localization across languages. In healthcare and legal tech, teams use it to compress long documents into actionable summaries, cutting the time experts spend reading source material. Enterprises integrating with Microsoft Azure OpenAI Service frequently apply these models to internal knowledge bases, letting employees query proprietary documentation in plain language rather than navigating folder hierarchies.

When you evaluate generative AI vs machine learning for a specific project, the clearest signal comes from your output requirement. Ask yourself one question: does your project need to predict something from existing data, or does it need to produce something new? If the answer is prediction, classification, or scoring, machine learning is the right fit. If the answer involves generating text, code, images, or structured summaries that don't exist yet, generative AI is what you need.

Your problem definition drives every downstream decision, from data collection to model architecture to deployment infrastructure. Machine learning works best when you have a well-scoped prediction target and a labeled historical dataset to train against. If your use case has a fixed output space, such as "is this transaction fraudulent or not," traditional ML gives you a faster, cheaper, and more predictable path to production.

If your problem definition keeps expanding in scope or requires the system to handle open-ended inputs, that's a strong signal you need generative AI rather than a prediction model.

Generative AI projects demand a different skill set than traditional ML work. Your team needs familiarity with prompt engineering, vector databases, and retrieval-augmented generation pipelines. Microsoft's Azure AI documentation covers the infrastructure requirements in detail. Traditional ML projects follow more established patterns that most data engineering teams can execute without a steep ramp-up period.

Data availability also shapes the decision. If you hold large volumes of structured, labeled historical data, machine learning gives you a direct path to a working model. If your data is unstructured, sparse, or domain-specific, fine-tuning a pre-trained foundation model is often the faster and more cost-effective route than building a supervised pipeline from scratch.

The generative AI vs machine learning decision comes down to one practical question: does your project need to predict something from existing data, or create something entirely new? Machine learning handles prediction, classification, and scoring with speed and lower infrastructure cost. Generative AI handles construction, whether that's text, code, or structured summaries your users need generated on demand.

Both technologies sit under the same AI umbrella, but they solve fundamentally different problems and demand different data strategies, infrastructure, and team skill sets. Choosing the right approach early protects your timeline and budget from costly realignment later in the development cycle. Start with your output requirement, let that decision drive your architecture, and validate your assumptions before you scale.

If you're working through which approach fits your product, or you need a technical partner to build it correctly, connect with the team at Brilworks to scope and ship your next AI-powered product.

The key difference is that machine learning is a broad field of algorithms that learn from data to make predictions or decisions, while generative AI is a subset of machine learning focused on creating new, original content. Traditional machine learning analyzes and classifies existing data, whereas generative AI produces new text, images, code, audio, or video based on the patterns it has learned.

Generative AI is a specialized branch within machine learning. Machine learning is the umbrella term that covers supervised learning, unsupervised learning, reinforcement learning, and deep learning, and modern generative AI falls under deep learning: it uses advanced techniques such as neural networks and transformers to create new content.

Use cases differ significantly between the two. Traditional machine learning excels at prediction (fraud detection, recommendation systems), classification (spam filtering, medical diagnosis), and optimization (supply chain, pricing). Generative AI shines in content creation (writing, image generation), code development, virtual assistants, design automation, and creative applications. The decision depends on whether you need to analyze and predict, or to create and generate.

The right choice depends on your business needs. Choose traditional machine learning when you need to analyze data, make predictions, detect patterns, or automate decisions based on historical data. Choose generative AI when you need to create content, automate creative tasks, generate personalized experiences, or build conversational interfaces. Many businesses benefit from both, using each for the use cases it fits.

The technical differences span model architecture, training approach, and output. Traditional machine learning uses algorithms such as decision trees, random forests, and support vector machines on structured data, while generative AI relies on neural network architectures such as transformers, GANs, and diffusion models trained on massive unstructured datasets. Training a generative model from scratch requires significantly more computational resources and far larger datasets, and the resulting model produces new content rather than predictions or classifications.

