When you're building an AI-powered product, one of the first architectural decisions you'll face is how to make a large language model actually useful for your specific domain. The two leading approaches, LLM fine-tuning and RAG, solve this problem in fundamentally different ways, and picking the wrong one can cost you months of development time and tens of thousands of dollars in compute.

Fine-tuning reshapes a model's internal knowledge by training it on your data. Retrieval-Augmented Generation (RAG) keeps the base model intact and instead feeds it relevant external information at query time. Both have clear strengths, both have real limitations, and the right choice depends on your data, your use case, and how you plan to scale.

At Brilworks, we help companies build and ship AI-driven applications, from rapid MVPs to production-grade systems. We've implemented both fine-tuning and RAG pipelines across industries like fintech, healthcare, and logistics, so we've seen firsthand where each approach shines and where it falls short.

So what are the main differences between fine-tuning and RAG, and when should you use each? This article breaks down how both approaches work, weighs their pros and cons, and walks through specific use cases, so you can choose the approach that fits your project before you start writing code.

The question comes up early in almost every AI project. Your team has selected a base model, you have domain-specific data, and now you need to decide how to make the model actually useful for your use case. Both RAG and fine-tuning promise to get you there, but they operate at fundamentally different layers of the stack, which means the wrong choice ripples through your architecture, your budget, and your timeline before you notice the problem.

Choosing between these two approaches is not a decision you can easily reverse. Fine-tuning demands significant time and resources: GPU compute, curated training datasets, and multiple rounds of evaluation before the model is production-ready. If you spend three months fine-tuning and then discover that your source information changes every week, you're locked into a costly retraining cycle that slows down every release. Conversely, if you build a RAG pipeline for a task that requires the model to internalize complex reasoning patterns, you'll struggle with inconsistent outputs and hallucinations that are hard to eliminate completely.

The wrong architectural choice at the start of an AI project is one of the most expensive forms of technical debt a team can accumulate.

Both failure modes are common, and both stem from the same root cause: teams jump into implementation before clearly defining what the model needs to do and why.

When engineers and product teams weigh fine-tuning against RAG, they're typically trying to solve one of three concrete problems. First, they want the model to know things it was never trained on, like your internal product documentation, proprietary research, or recent events. Second, they want the model to respond in a specific format, tone, or domain style consistently. Third, they want to reduce hallucinations and get answers grounded in verified sources rather than the model's training data.

RAG is generally the better fit for the first and third problems, while fine-tuning is better suited to the second. The catch is that many real-world applications need to solve all three at once, which blurs the line between where one approach should end and the other should begin. That ambiguity is why teams often get stuck comparing the two instead of moving forward.

Each goal also carries very different data and infrastructure requirements. Knowing which goal dominates your use case is the fastest way to cut through the confusion and start making concrete architectural decisions.

The comparison gets harder when you factor in scale, latency, and long-term maintenance. A RAG pipeline that works well at low query volumes can become expensive and slow under production load if you haven't accounted for vector database costs and retrieval latency at scale. A fine-tuned model that performs well on your original task may degrade as your domain evolves, requiring full or partial retraining to stay current.

There's also a skills gap to consider. Fine-tuning a model and evaluating it rigorously requires solid machine learning expertise, which many product teams don't have in-house, so they end up hiring specialists or relying on outside experts. RAG pipelines are more accessible to engineers with a standard software development background, although getting them to perform well in production still takes careful planning.

Because the two approaches carry such different costs and risks, it pays to understand the tradeoffs before you commit. The rest of this article explains how each approach works, where each is strong and weak, and what happens when you combine them for use cases where neither is enough on its own.

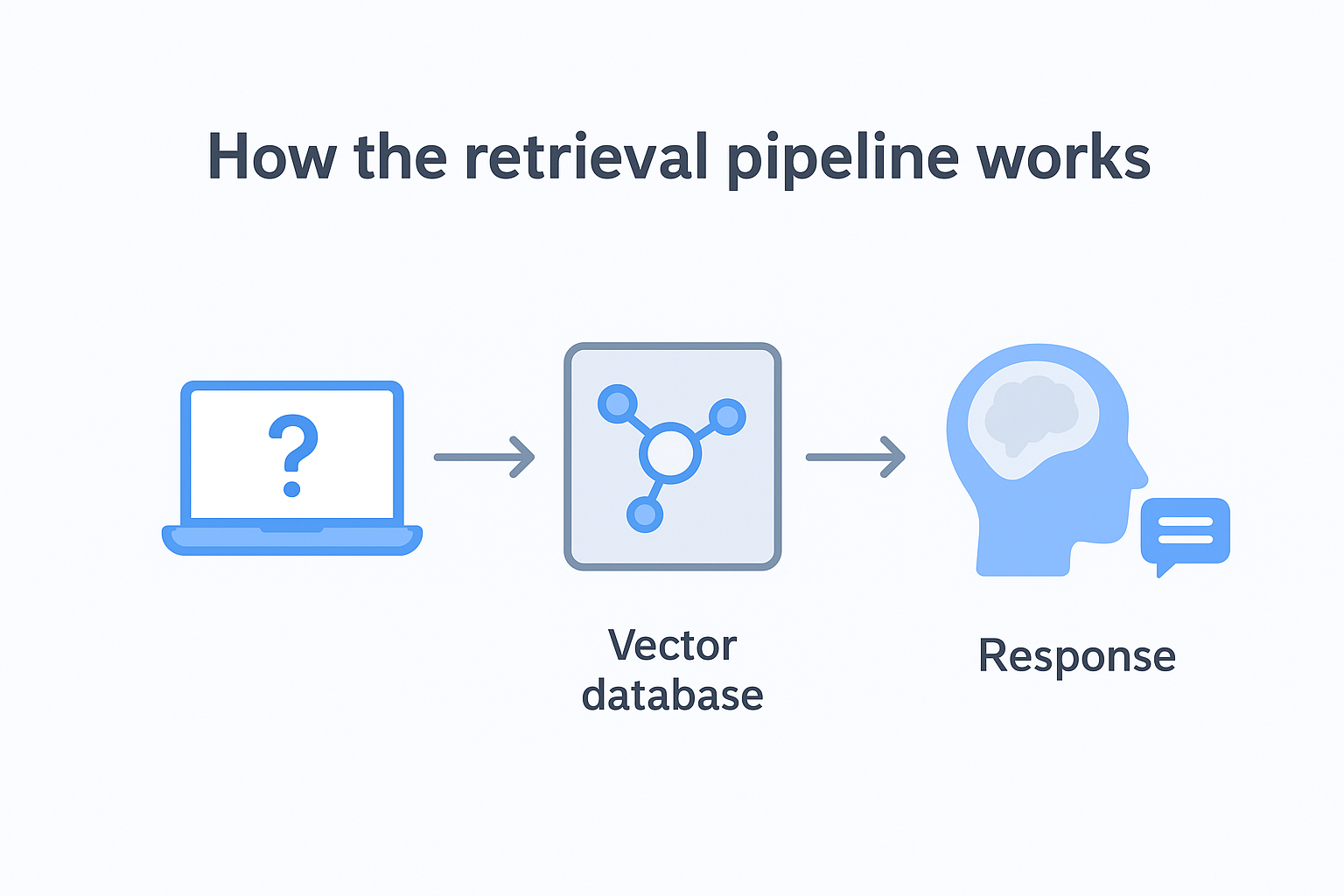

Retrieval-Augmented Generation is an architecture that connects a language model to an external knowledge source at the moment a query arrives. Instead of relying entirely on what the model learned during pretraining, RAG pulls relevant documents or data chunks from a separate store and injects them directly into the prompt before the model ever generates a word. The model then produces its response using both its built-in reasoning capabilities and the retrieved context you supply, which means the output reflects your actual data rather than the model's general training knowledge.

When a user submits a query, the RAG system converts it into a vector embedding and searches a vector database for the most semantically similar content. The top-matching chunks are then added to the prompt alongside the original question. The model reasons over this combined input and generates an answer grounded in the retrieved information rather than only in what it learned during pretraining, which makes its responses more accurate, more relevant to the question, and less likely to be fabricated.
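To make the query-time flow concrete, here is a minimal, self-contained Python sketch. It assumes a toy bag-of-words vector (lowercase word counts) as a stand-in for a real embedding model and a plain Python list as a stand-in for a vector database; the function names, prompt template, and sample chunks are hypothetical and made up for illustration.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Inject the retrieved chunks into the prompt ahead of the question."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"


chunks = [
    "Refunds are processed within 5 business days.",
    "Our office is closed on public holidays.",
    "Premium plans include priority support.",
]
query = "How long do refunds take?"
prompt = build_prompt(query, retrieve(query, chunks, k=1))
print(prompt)
```

In a real system you would swap `embed` for an embedding model and `retrieve` for a vector database query; the rank-then-inject shape stays the same.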

RAG does not change what the model knows internally; it changes what the model can see at the moment it answers.

The pipeline has three core components working in sequence: an embedding model that converts your text into numerical vectors, a vector store that indexes and retrieves those vectors based on semantic similarity, and the base LLM that generates the final response. Each component can be swapped or upgraded independently, which gives your team real flexibility as your data volume grows or your retrieval accuracy requirements become more demanding.
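One way to keep the three components independently swappable is to code against small interfaces. The sketch below uses Python `Protocol` classes; `Embedder`, `VectorStore`, `LLM`, `RagPipeline`, and the stub implementations are hypothetical names, not any particular library's API. It also returns the source document IDs with each answer, which is what makes per-response source attribution possible.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class Chunk:
    doc_id: str
    text: str


class Embedder(Protocol):
    def embed(self, text: str) -> list[float]: ...


class VectorStore(Protocol):
    def search(self, vector: list[float], k: int) -> list[Chunk]: ...


class LLM(Protocol):
    def generate(self, prompt: str) -> str: ...


class RagPipeline:
    """Composes the three components; any one can be replaced independently."""

    def __init__(self, embedder: Embedder, store: VectorStore, llm: LLM, k: int = 3):
        self.embedder = embedder
        self.store = store
        self.llm = llm
        self.k = k

    def answer(self, query: str) -> tuple[str, list[str]]:
        chunks = self.store.search(self.embedder.embed(query), self.k)
        context = "\n".join(f"[{c.doc_id}] {c.text}" for c in chunks)
        reply = self.llm.generate(f"Context:\n{context}\n\nQuestion: {query}")
        # Return source IDs with the answer so every response can be traced.
        return reply, [c.doc_id for c in chunks]


# Stub implementations for demonstration only.
class StubEmbedder:
    def embed(self, text: str) -> list[float]:
        return [float(len(text))]  # placeholder, not a real embedding


class StubStore:
    def __init__(self, chunks: list[Chunk]):
        self.chunks = chunks

    def search(self, vector: list[float], k: int) -> list[Chunk]:
        return self.chunks[:k]  # a real store would rank by similarity


class StubLLM:
    def generate(self, prompt: str) -> str:
        return "See the travel policy for per-diem limits."


pipeline = RagPipeline(
    StubEmbedder(),
    StubStore([Chunk("travel-policy-v3", "Per-diem limits are set per city.")]),
    StubLLM(),
)
reply, sources = pipeline.answer("What is the per-diem limit?")
print(reply, sources)
```

Upgrading the embedding model or moving to a different vector database then means writing one new class that satisfies the same interface, without touching the rest of the pipeline.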

One major advantage of RAG over fine-tuning is how easy it is to keep the knowledge base current. When internal documents change or you release new product guidelines, you simply re-index the updated content in the vector store, and the system reflects those changes the next time someone asks a question. There's no model to retrain and no new model deployment to coordinate across your infrastructure, which saves significant time and money.
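Re-indexing usually amounts to an upsert keyed by document ID: drop the old chunks for a document and write the new ones. The `InMemoryIndex` class below is a hypothetical stand-in for a vector store, and it skips the embedding step for brevity; a real store would also re-embed each new chunk.

```python
class InMemoryIndex:
    """Hypothetical stand-in for a vector store, keyed by document ID.
    Embedding is omitted for brevity; a real store would embed each chunk."""

    def __init__(self) -> None:
        self._chunks: dict[str, list[str]] = {}

    def upsert(self, doc_id: str, text: str, chunk_size: int = 500) -> None:
        # Replace all chunks for this document so stale content is dropped.
        self._chunks[doc_id] = [
            text[i:i + chunk_size] for i in range(0, len(text), chunk_size)
        ]

    def delete(self, doc_id: str) -> None:
        self._chunks.pop(doc_id, None)

    def all_chunks(self) -> list[str]:
        return [c for chunks in self._chunks.values() for c in chunks]


index = InMemoryIndex()
index.upsert("pricing", "The Pro plan costs $20 per month.")
# The pricing page changes: re-index it. No model retraining is involved.
index.upsert("pricing", "The Pro plan costs $25 per month.")
print(index.all_chunks())
```

Keying by document ID is the design choice that matters here: without it, an update would add new chunks next to the stale ones, and retrieval could surface either version.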

Because RAG grounds each answer in the documents it retrieves, you can trace exactly which sources were used for each response. In regulated industries like healthcare and finance, that traceability is not just a nice-to-have; it's often a compliance requirement for explaining how AI-driven decisions are made. Building it into the system from the start is much cheaper than retrofitting it after everything is already in production.

Fine-tuning takes a different approach: it starts from a pretrained language model and continues training it on a smaller, task-specific dataset that you provide.

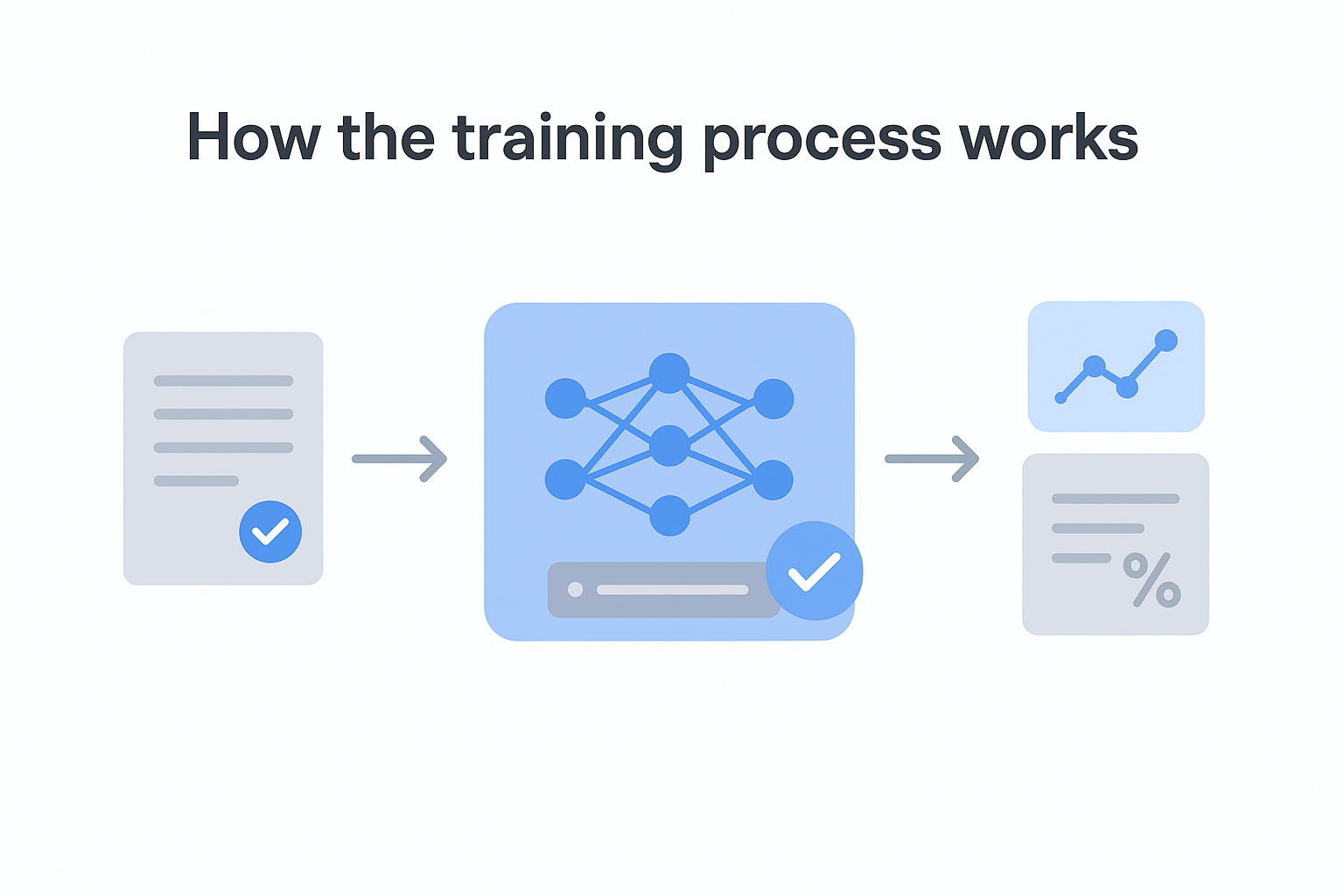

You start with a base model, which could be an openly available model such as Meta's Llama or a proprietary model that you access through a vendor's API and fine-tune to your needs. Next, you assemble a labeled dataset that demonstrates how you want the model to behave, usually as input-output pairs covering the situations you're targeting. During training, the model processes these examples in batches, measures how far its outputs are from the expected ones, and adjusts its weights to reduce that error over many iterations.
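The training loop described above (process a batch, compare outputs with the expected targets, adjust weights) follows the same basic cycle for any gradient-trained model. As a toy analogy only, the sketch below "fine-tunes" a two-parameter linear model with minibatch gradient descent in plain Python. A real LLM has billions of weights and would be trained with a framework such as PyTorch, but each step has the same basic shape. `fine_tune`, the learning rate, and the synthetic target rule `y = 3x + 1` are illustrative assumptions.

```python
import random


def fine_tune(w: float, b: float, pairs: list[tuple[float, float]],
              lr: float = 0.01, epochs: int = 200, batch_size: int = 4,
              seed: int = 0) -> tuple[float, float]:
    """Continue training starting weights (w, b) on (input, target) pairs."""
    rng = random.Random(seed)
    data = list(pairs)
    for _ in range(epochs):
        rng.shuffle(data)
        for i in range(0, len(data), batch_size):
            batch = data[i:i + batch_size]
            # Compare predictions with expected outputs (mean squared error gradient).
            grad_w = sum(2 * ((w * x + b) - y) * x for x, y in batch) / len(batch)
            grad_b = sum(2 * ((w * x + b) - y) for x, y in batch) / len(batch)
            # Nudge the weights in the direction that reduces the batch error.
            w -= lr * grad_w
            b -= lr * grad_b
    return w, b


def mse(w: float, b: float, pairs: list[tuple[float, float]]) -> float:
    return sum((w * x + b - y) ** 2 for x, y in pairs) / len(pairs)


pairs = [(float(x), 3.0 * x + 1.0) for x in range(-5, 6)]  # target behavior
w0, b0 = 1.0, 0.0  # "pretrained" starting weights
w1, b1 = fine_tune(w0, b0, pairs)
print(round(w1, 3), round(b1, 3))
```

The key point the analogy carries over: training starts from existing weights rather than from scratch, and the dataset of input-output pairs is the only thing steering where those weights end up.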

Fine-tuning does not teach the model to retrieve information; it teaches the model to think and respond in a specific way.

Evaluation runs alongside training so your team can monitor performance metrics and stop the process before the model overfits to your training data and loses its general reasoning ability. Getting that balance right requires careful dataset curation and iteration, which is one of the main reasons fine-tuning demands more ML expertise than most teams initially expect.
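Early stopping is the usual guard against overfitting: keep the checkpoint with the best validation loss and stop once the loss fails to improve for a set number of evaluations (the "patience"). The function below is a minimal sketch of that rule applied to a recorded loss history; the loss values are invented for illustration. Training frameworks typically ship this as a built-in; Hugging Face `transformers`, for example, provides an `EarlyStoppingCallback`.

```python
def early_stopping_epoch(val_losses: list[float], patience: int = 2,
                         min_delta: float = 0.0) -> int:
    """Return the index of the checkpoint to keep: the best validation loss seen
    before `patience` consecutive evaluations failed to improve on it."""
    best_epoch, best_loss, waited = 0, float("inf"), 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_epoch, best_loss, waited = epoch, loss, 0
        else:
            waited += 1
            if waited >= patience:
                break  # stop training; later epochs are likely overfitting
    return best_epoch


# Validation loss falls, then rises as the model starts to overfit.
history = [1.20, 0.85, 0.70, 0.66, 0.69, 0.74, 0.81]
print(early_stopping_epoch(history))
```

Here training would stop at the sixth evaluation and keep the fourth checkpoint, the last one before validation loss started climbing.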

Unlike RAG, which leaves the model's internal weights completely untouched, fine-tuning produces a modified version of the model itself. That distinction matters when you think about maintenance and deployment. Your fine-tuned model is a discrete artifact that requires versioning, storage, and a retraining cycle whenever your domain requirements shift significantly.
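Treating the fine-tuned model as a versioned artifact can be as simple as recording which base model and which exact dataset produced it. The sketch below is a hypothetical registry record, not any specific tool's schema. It fingerprints the training data with SHA-256 over a canonical JSON serialization so you can tell whether two versions were trained on identical data; the model name, base model identifier, and storage path are placeholders.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelVersion:
    """Hypothetical registry record for one fine-tuned model artifact."""
    name: str
    version: int
    base_model: str
    dataset_sha256: str
    artifact_uri: str


def dataset_fingerprint(examples: list[dict]) -> str:
    # Canonical JSON (sorted keys) so the same data always hashes the same way.
    canonical = json.dumps(examples, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


def register(registry: list[ModelVersion], name: str, base_model: str,
             examples: list[dict]) -> ModelVersion:
    """Append a new version of `name`, numbered one past the latest existing one."""
    version = max((m.version for m in registry if m.name == name), default=0) + 1
    record = ModelVersion(
        name=name,
        version=version,
        base_model=base_model,
        dataset_sha256=dataset_fingerprint(examples),
        artifact_uri=f"models/{name}/v{version}",  # placeholder storage path
    )
    registry.append(record)
    return record


registry: list[ModelVersion] = []
v1 = register(registry, "ticket-classifier", "base-model-x",
              [{"input": "I was charged twice", "output": "billing"}])
v2 = register(registry, "ticket-classifier", "base-model-x",
              [{"input": "I was charged twice", "output": "billing"},
               {"input": "App crashes on login", "output": "technical"}])
print(v2)
```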

This is where fine-tuning shows its most significant constraint. If your target domain evolves, you cannot just update a document store and call it done. You need fresh training data, another training run, and a new round of evaluation before you can confidently push updates to production. For use cases with stable knowledge requirements, like a model trained to write in your brand's legal tone or to classify support tickets into fixed categories, that rigidity is a reasonable tradeoff for the consistency and quality fine-tuning delivers.
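The evaluation step before a production push is often enforced as a promotion gate: a retrained candidate replaces the production model only if it doesn't regress on the metrics you track. Here is a minimal sketch, assuming every metric is higher-is-better and a tolerance of one percentage point; `should_promote`, the metric names, and the scores are hypothetical.

```python
def should_promote(candidate: dict[str, float], production: dict[str, float],
                   max_regression: float = 0.01) -> bool:
    """Return True if the candidate improves on at least one tracked metric
    and drops no metric by more than `max_regression`.
    Assumes higher is better for every metric."""
    improved = False
    for metric, prod_score in production.items():
        cand_score = candidate.get(metric)
        if cand_score is None or cand_score < prod_score - max_regression:
            return False  # missing metric or unacceptable regression
        if cand_score > prod_score:
            improved = True
    return improved


production = {"accuracy": 0.91, "format_valid_rate": 0.98}
candidate = {"accuracy": 0.93, "format_valid_rate": 0.975}
print(should_promote(candidate, production))
```

Requiring at least one improvement, not just "no regression", keeps retraining runs from churning the production model without any measurable benefit.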

So what's the core difference? It comes down to where the model's knowledge lives. With RAG, knowledge sits outside the model in a retrieval layer that you control and can update at any time. With fine-tuning, knowledge is encoded in the model's weights, so changing what the model knows means running another training cycle. Nearly every practical difference between the two approaches follows from this one distinction.

RAG fetches information on demand, pulling relevant chunks from a vector database and passing them to the model as context. The model doesn't retain that information; it receives it fresh with every query. Fine-tuning, by contrast, changes the model's internal weights during training so that it learns patterns and domain knowledge directly. Once training is complete, the model can apply what it learned to new questions in that domain without any external lookup.

The two approaches also handle new information very differently. RAG picks up new documents as soon as they're indexed, which makes it well suited to knowledge that changes weekly or monthly. Fine-tuning requires a new training run to incorporate new information, which can take days or even weeks depending on dataset size and available compute. If your knowledge base changes constantly, RAG is usually the better choice; if updates are infrequent, the longer retraining cycle of fine-tuning may be acceptable.

RAG adds latency at inference time because every query triggers a retrieval step before generation begins. That retrieval overhead is manageable at moderate query volumes, but it compounds quickly at scale if your vector database and embedding pipeline are not optimized. Fine-tuned models respond directly without a retrieval step, which generally produces lower and more predictable latency per query once the model is deployed.
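To see how much of each request the retrieval step costs, it helps to time each stage separately. This sketch wraps the embed, retrieve, and generate stages with `time.perf_counter`. The three stage functions are hypothetical stubs that sleep to simulate work, so the measured numbers mean nothing beyond demonstrating the instrumentation.

```python
import time
from contextlib import contextmanager


@contextmanager
def timed(stage: str, timings: dict[str, float]):
    """Record the wall-clock duration of the enclosed block in milliseconds."""
    start = time.perf_counter()
    try:
        yield
    finally:
        timings[stage] = (time.perf_counter() - start) * 1000


def answer_with_timings(query, embed, search, generate):
    """Run one RAG request and return (reply, per-stage latency in ms)."""
    timings: dict[str, float] = {}
    with timed("embed", timings):
        vector = embed(query)
    with timed("retrieve", timings):
        chunks = search(vector)
    with timed("generate", timings):
        reply = generate(query, chunks)
    # Everything before generation is overhead a fine-tuned model would not pay.
    timings["retrieval_overhead"] = timings["embed"] + timings["retrieve"]
    return reply, timings


# Hypothetical stubs that sleep to simulate work.
def fake_embed(query):
    time.sleep(0.005)
    return [0.0]


def fake_search(vector):
    time.sleep(0.02)
    return ["chunk"]


def fake_generate(query, chunks):
    time.sleep(0.01)
    return "answer"


reply, timings = answer_with_timings("q", fake_embed, fake_search, fake_generate)
print({stage: round(ms, 1) for stage, ms in timings.items()})
```

Logging these per-stage numbers in production (and watching their high percentiles, not just averages) shows whether retrieval overhead is growing as the index and query volume scale.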

The two methods also excel at different tasks. RAG is strong at question answering, document summarization, and information lookup over sources that change over time. Fine-tuning is better for tasks that demand a consistent style, specialized reasoning, or a fixed output format, such as drafting clinical notes for medical records, generating code for an in-house system, or classifying customer intent into a predefined set of categories with high accuracy.

Both approaches have clear strengths on paper, but the numbers that matter most to your team often don't show up in documentation or vendor pricing pages. Understanding the full cost profile of each option helps you budget accurately before you commit to an architecture, not after you've already burned through a sprint.

RAG's biggest strength is freshness: responses reflect whatever is currently in your index. Once you re-index changed documents, the updates show up in answers immediately, without redeploying or restarting anything. That flexibility makes RAG a strong choice for teams working with fast-changing information such as internal wikis, customer knowledge bases, or frequently updated rules and regulations.

The real expenses accumulate in the retrieval layer. Vector database hosting, embedding model API calls, and re-indexing pipelines add up faster than teams expect when query volume climbs. Retrieval quality also degrades silently if your chunking strategy is poor or your index grows without regular maintenance. That means you need dedicated engineering time to monitor and tune the pipeline, which is a cost that rarely appears in initial project estimates.
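Chunking strategy is one of the main levers on retrieval quality. A common baseline is fixed-size windows with some overlap, so that a fact straddling a boundary is more likely to appear intact in at least one chunk. The sketch below splits on whitespace-delimited words; `chunk_words` is a hypothetical helper, and its 200-word size and 40-word overlap defaults are assumptions to tune against your own retrieval evaluations, not recommended values.

```python
def chunk_words(text: str, size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into windows of `size` words, each sharing `overlap` words
    with the previous window."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be >= 0 and smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reaches the end of the text
    return chunks


sample = " ".join(str(i) for i in range(10))
print(chunk_words(sample, size=4, overlap=1))
```

Re-running a fixed set of test queries whenever you change `size` or `overlap` is a cheap way to catch the silent quality drops described above.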

Fine-tuning gives you consistent, low-latency outputs that don't depend on a retrieval step. For tasks like structured data extraction, brand-specific content generation, or domain-specific classification, a well-trained model will typically outperform a RAG system running on the same base model. Response quality feels tighter because the behavior is baked into the weights rather than assembled at runtime from retrieved chunks.

Your costs are front-loaded but substantial. Curating a high-quality training dataset is the most time-intensive part of the process, and most teams underestimate it by a wide margin. Beyond data preparation, you're paying for GPU compute during training runs, evaluation infrastructure, and the ML expertise required to monitor for overfitting and regression. When your domain shifts and you need to retrain, those costs repeat in full, which makes fine-tuning significantly more expensive to maintain over a product's full lifecycle than RAG for most teams.
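Because fine-tuning costs recur with every retrain while RAG costs accrue month by month, comparing the two over a product's lifetime rather than at launch makes the difference visible. The function below is a deliberately simple, hypothetical cost model. Every figure in the example is a placeholder chosen only to show the arithmetic, not an estimate of real-world prices; plug in your own numbers.

```python
from typing import Optional


def lifecycle_cost(months: int, upfront: float, monthly: float,
                   retrain_cost: float = 0.0,
                   retrain_every: Optional[int] = None) -> float:
    """Total cost over `months`: one-time setup, a recurring monthly bill, and
    a full retraining run every `retrain_every` months (if given)."""
    retrains = months // retrain_every if retrain_every else 0
    return upfront + monthly * months + retrain_cost * retrains


# Placeholder figures purely to illustrate the arithmetic; substitute your own.
# monthly: model hosting for fine-tuning; vector DB, embeddings, upkeep for RAG.
fine_tuning = lifecycle_cost(24, upfront=30_000, monthly=1_000,
                             retrain_cost=20_000, retrain_every=6)
rag = lifecycle_cost(24, upfront=10_000, monthly=3_000)
print(fine_tuning, rag)
```

The useful output is not the totals themselves but how sensitive they are to `retrain_every`: the more often your domain shifts, the faster the fine-tuning line climbs.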

No single framework fits every team, but the fine-tuning vs. RAG decision becomes much clearer when you start from two concrete questions: how often does your knowledge change, and what specific behavior do you need the model to produce? Your answers to those two questions will rule out one option in most situations and show you where to invest your engineering resources before you write a line of code.

If your information changes frequently, say monthly or more often, start with RAG. Internal documents, product catalogs, support articles, and compliance rules are updated constantly, and retraining a model every time one of them changes is neither practical nor cost-effective. With RAG, you make those changes directly in the vector store, and they show up in the model's responses without touching the model or running a new training job.

Stable knowledge domains are a different story entirely. Medical coding logic, legal contract language, and brand tone guidelines change rarely, if ever, and those use cases benefit from a model that has internalized the patterns deeply rather than retrieving them from an external store on every request. When your domain fits that description, fine-tuning delivers more consistent and lower-latency results than a retrieval pipeline will.

Your primary task type matters just as much as update frequency. Use this decision table to orient your thinking before committing to either architecture:

| Primary task | Recommended approach |
|---|---|
| Question answering over current documents | RAG |
| Consistent structured output (forms, reports) | Fine-tuning |
| Knowledge lookup with source attribution | RAG |
| Domain-specific tone or writing style | Fine-tuning |
| Real-time data summarization | RAG |
| Classification with fixed categories | Fine-tuning |

The pattern is straightforward. If your task centers on recalling facts from sources that change over time, RAG is likely the right fit. If it requires applying a learned reasoning pattern or output schema consistently regardless of input, fine-tuning is probably the better choice. If your task spans both columns, requiring recall of evolving facts and consistent reasoning at the same time, you'll need to look more closely before committing to either architecture.
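The decision table can be encoded as a small lookup so the rule is explicit: if all of a project's primary tasks map to the same column, use that approach; if they span both columns, flag the project for closer analysis. The `Approach` enum and `recommend` function are hypothetical helpers that restate the table, nothing more.

```python
from enum import Enum


class Approach(Enum):
    RAG = "RAG"
    FINE_TUNING = "fine-tuning"
    BOTH = "spans both columns: analyze further"


# One entry per row of the decision table.
TASK_TABLE = {
    "question answering over current documents": Approach.RAG,
    "consistent structured output (forms, reports)": Approach.FINE_TUNING,
    "knowledge lookup with source attribution": Approach.RAG,
    "domain-specific tone or writing style": Approach.FINE_TUNING,
    "real-time data summarization": Approach.RAG,
    "classification with fixed categories": Approach.FINE_TUNING,
}


def recommend(tasks: list[str]) -> Approach:
    """Map a project's primary tasks to an approach using the table.
    Raises KeyError for a task that isn't in the table."""
    if not tasks:
        raise ValueError("list at least one primary task")
    picks = {TASK_TABLE[t.lower()] for t in tasks}
    return picks.pop() if len(picks) == 1 else Approach.BOTH


print(recommend(["Real-time data summarization"]))
print(recommend(["Classification with fixed categories",
                 "Knowledge lookup with source attribution"]))
```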

The choice isn't always either-or. Some projects need both consistent behavior and access to the latest information, and picking one approach means sacrificing something your users care about. In those cases, you can layer the two: each one handles the problem it's best suited for, instead of forcing a single approach to do everything.

The clearest signal is when you need a model that responds in a domain-specific style while also pulling accurate, current information from a live knowledge base. A medical documentation tool, for example, might need a fine-tuned model that always produces structured clinical notes in a specific format, while simultaneously retrieving the latest drug interaction guidelines from an indexed document store. Neither approach alone covers both requirements without significant degradation in either quality or freshness.

Customer support systems are another common case. The model needs to sound consistently on-brand and follow specific escalation rules, which fine-tuning handles well. But product features and pricing change often, which fine-tuning handles poorly. RAG fills that gap by letting the model answer questions about current information without retraining every time something changes. Using fine-tuning for behavior and RAG for knowledge keeps both up to date with minimal overhead, so the system stays consistent in tone while always drawing on the latest information.

In a hybrid architecture, you first fine-tune the base model on the reasoning patterns, output format, or tone that should stay stable over time. Once that model is deployed, you connect it to a RAG pipeline that retrieves relevant information at query time. The fine-tuned model then applies its learned behavior to whatever context the pipeline supplies, producing structured, consistent output grounded in current information, so you don't have to trade one for the other.
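The layering can be sketched as a single function that takes a retriever and a fine-tuned model as inputs. In this hypothetical Python example, `stub_retriever` stands in for a live RAG pipeline and `stub_support_model` stands in for a fine-tuned model that always answers in the same brand template; neither is a real model or index, and the pricing fact is made up.

```python
from typing import Callable


def hybrid_answer(query: str,
                  retrieve: Callable[[str], list[str]],
                  fine_tuned_generate: Callable[[str], str]) -> str:
    """RAG supplies current facts; the fine-tuned model supplies consistent
    structure and tone."""
    context = "\n".join(retrieve(query))
    prompt = f"Context:\n{context}\n\nRequest: {query}"
    return fine_tuned_generate(prompt)


# Hypothetical stand-ins; neither is a real model or index.
def stub_retriever(query: str) -> list[str]:
    return ["The Pro plan costs $25 per month."]  # would come from a live index


def stub_support_model(prompt: str) -> str:
    # Mimics a fine-tuned model that always answers in the same brand template.
    facts = prompt.split("Context:\n", 1)[1].split("\n\nRequest:", 1)[0]
    return f"Hi there! {facts} Anything else I can help with?"


print(hybrid_answer("How much is the Pro plan?", stub_retriever, stub_support_model))
```

Because the two halves meet only at the prompt, you can re-index the knowledge base or retrain the model on their own schedules.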

The tradeoff is operational complexity. You now have a trained model and a live retrieval pipeline, each of which needs its own monitoring, debugging, and update cycle. That extra work pays off when your product can't afford either outdated answers or inconsistent results under load, but before adopting a hybrid architecture, make sure the added complexity is justified by the benefits it brings.

To sum up, the choice between fine-tuning and RAG comes down to two factors: how often your data changes and what behavior you need from the model. If your knowledge base is updated frequently and source attribution matters, RAG is probably the right path. If you need the model to internalize specific reasoning patterns, produce consistent output, or capture a domain-specific tone, fine-tuning is the better choice. And if your project needs both, a hybrid architecture covers ground that neither approach can cover on its own.

Before you commit to a path, be clear about what you need the model to do and how your data will be updated. Settling those questions up front is far cheaper than fixing an architectural mismatch after you've started building. If you're still weighing your options or need a technical partner to build the right system for your specific requirements, talk to the AI development team at Brilworks and get the architecture right from day one.

LLM fine-tuning and RAG differ in how they improve a model's output. Fine-tuning updates the model itself by training it on new data, while RAG retrieves relevant external data at runtime without changing the model.

Fine-tuning is the better choice when you need the model to learn domain-specific behavior. RAG is more suitable when you need up-to-date information or want to avoid retraining the model frequently.

Fine-tuning is usually more expensive because of training compute, infrastructure, and ongoing retraining. RAG is often more cost-effective since it updates knowledge by re-indexing data rather than retraining the model, though its retrieval infrastructure carries its own running costs.

For real-time use cases, the answer depends on the requirement. RAG is usually preferred for dynamic, real-time data access, while fine-tuning works well for consistent, predefined outputs.

Yes. Fine-tuning and RAG are not mutually exclusive, and many applications combine them, using fine-tuning for behavior and RAG for access to fresh or external data.

